
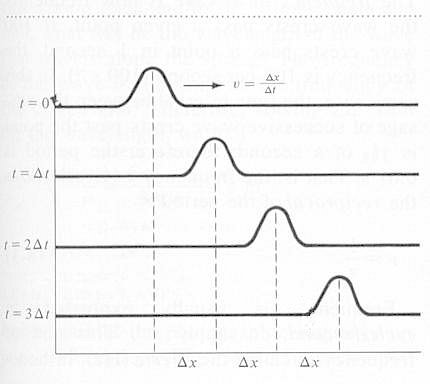

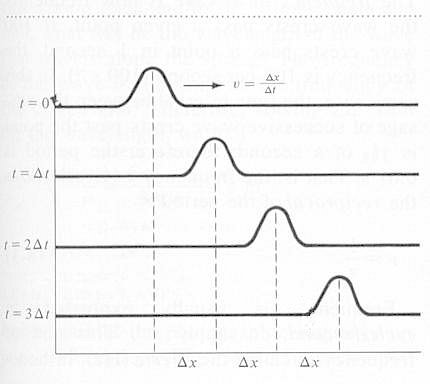

Wave motion is one of the most familiar of natural phenomena. When a medium, whether gas, liquid, or solid, is disturbed, the disturbance moves out in all directions until it encounters a boundary, at which point it is absorbed, reflected, or refracted depending on the nature of the discontinuity. In reality, the wave also fades away gradually through damping in the medium. The physics of wave motion is best illustrated in one dimension, as in a string. Figure 01 shows a pulse generated by a single up-and-down motion of the string. The pulse moves out as shown in successive time frames. If the up-and-down motion of the string is instead driven by a motor, it generates a traveling wave in the form of a sine function, as shown in Figure 02.

Figure 01 Pulse

Figure 02 Traveling Wave

The distance between two successive crests of the wave is $\lambda$, which is called the wavelength. The frequency of a wave is how frequently the wave crests pass a given point. If 100 wave crests pass a point in 1 second, the frequency is 100 cycles per second (sometimes expressed as 100 c/s, 100 cps, or 100 Hz). The frequency $f$ and the period $T$ are related by a simple formula:

$f = 1/T$ ---------- (1)

The wavelength and the frequency are related to the velocity $v$ of the wave by

$\lambda f = \lambda / T = v$, ---------- (2)

and the velocity is in turn related to the tension $F$ and the mass per unit length $\mu$ of the medium by yet another formula:

$v = (F/\mu)^{1/2}$, ---------- (3)

The displacement $u(x, t)$ of the string satisfies the wave equation

$\partial^2 u / \partial x^2 = (1/v^2)\, \partial^2 u / \partial t^2$ ---------- (4a)

which has the traveling sine wave as a solution:

$u = A \sin(kx - \omega t)$ ---------- (4b)

where $\omega = 2\pi f$, $k = 2\pi/\lambda$, and A is the amplitude as shown in Figure 02. It can be shown that the cosine function in a form similar to Eq.(4b) is also a solution of Eq.(4a), with the initial and boundary condition u = A at t = 0 and x = 0.
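The relations in Eqs.(1), (2), and (4b) can be tried out numerically. The following is a minimal sketch, not part of the original text: the function names, the 0.5 m wavelength, and the 0.02 m amplitude are assumptions chosen for illustration, while the 100 Hz frequency is the example value quoted above. The last function estimates both sides of the wave equation (4a) by finite differences to check that the sine wave satisfies it.

```python
import math

def wave_parameters(wavelength, frequency):
    """Return period T, speed v, angular frequency omega, and wavenumber k."""
    period = 1.0 / frequency            # Eq.(1): f = 1/T
    speed = wavelength * frequency      # Eq.(2): v = lambda * f
    omega = 2.0 * math.pi * frequency   # omega = 2*pi*f
    k = 2.0 * math.pi / wavelength      # k = 2*pi/lambda
    return period, speed, omega, k

def displacement(x, t, amplitude, k, omega):
    """Traveling sine wave of Eq.(4b): u = A sin(kx - omega*t)."""
    return amplitude * math.sin(k * x - omega * t)

def wave_equation_residual(x, t, amplitude, k, omega, speed, h=1e-3):
    """Finite-difference estimate of u_xx - u_tt / v^2, which Eq.(4a) says is zero."""
    def u(xx, tt):
        return displacement(xx, tt, amplitude, k, omega)
    dt = h / speed  # time step matched to the space step
    u_xx = (u(x + h, t) - 2.0 * u(x, t) + u(x - h, t)) / h**2
    u_tt = (u(x, t + dt) - 2.0 * u(x, t) + u(x, t - dt)) / dt**2
    return u_xx - u_tt / speed**2

# Assumed example values: 0.5 m wavelength, 100 Hz, 0.02 m amplitude.
period, speed, omega, k = wave_parameters(wavelength=0.5, frequency=100.0)
print("period (s):", period)
print("speed (m/s):", speed)
print("residual of Eq.(4a):", wave_equation_residual(0.3, 0.002, 0.02, k, omega, speed))
```

The residual comes out at the level of rounding error, which is what one expects if Eq.(4b) solves Eq.(4a) when $\omega / k = v$.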

If the vibrating string is attached to a rigid support at the other end, the traveling wave will be reflected and will begin to travel back toward the driven end. If the frequency of vibration is not properly chosen, the direct wave and the reflected wave combine to produce a jumbled wave pattern. Only a number of particular frequencies produce regular patterns of motion along the string. At these frequencies, certain positions along the string remain stationary (the nodes) while the rest of the string vibrates with a constant amplitude, forming a standing wave (Figures 03 and 04).

Figure 03 Standing Wave, Animation

Figure 04 Standing Wave

The condition for a standing wave on a string of length $L$ is

$L = n(\lambda/2)$, ---------- (5)

where n = 1, 2, 3, ... The lowest frequency (n = 1) is the fundamental frequency $f_o$ for the particular string. The higher frequencies at the integer multiples $2f_o$, $3f_o$, $4f_o$, ... are called harmonics or overtones. Usually, the dominant standing wave is the fundamental, as shown in Figure 11a, which displays the proportion of the fundamental to the various harmonics for different kinds of musical instruments. Since Eq.(4a) is a linear differential equation, the sum of separate solutions is also a solution. Thus, superposition of the fundamental and the harmonics can generate different kinds of waveforms, as shown in Figure 05. Mathematically, it is expressed by the Fourier series f(x) with u = f(x) sin(n$\pi$vt/L):

[Equation (6): Fourier series for f(x)] ---------- (6)

where f(x) is the maximum displacement of the wave at x, L = $\lambda$/2 (with $\lambda$ the wavelength of the fundamental), and n = 1, 2, 3, ... Figure 06 depicts the various waveforms produced by the respective Fourier series.

Figure 05 Composite Wave

Figure 06 Fourier Series and Waveforms
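The standing-wave condition and the superposition of harmonics can be sketched in a few lines of code. This is an illustration under stated assumptions rather than anything taken from the original: the function names are hypothetical, the string is taken to be fixed at x = 0 and x = L, each mode is given the spatial shape sin(n$\pi$x/L) with the time factor sin(n$\pi$vt/L) quoted above, and the string length (0.65 m) and wave speed (130 m/s) are made-up values. With one half wavelength fitting n times into L, the allowed frequencies are n v / (2L), that is, integer multiples of the fundamental.

```python
import math

def harmonic_frequencies(length, speed, count):
    """Frequencies allowed by L = n*(lambda/2): f_n = n*v/(2L) = n*f_o."""
    fundamental = speed / (2.0 * length)
    return [n * fundamental for n in range(1, count + 1)]

def standing_wave(x, t, n, length, speed, amplitude=1.0):
    """n-th standing wave on a string fixed at x = 0 and x = L (assumed shape)."""
    shape = math.sin(n * math.pi * x / length)          # spatial profile with fixed nodes
    swing = math.sin(n * math.pi * speed * t / length)  # time factor sin(n*pi*v*t/L)
    return amplitude * shape * swing

def composite_wave(x, t, amplitudes, length, speed):
    """Superpose harmonics; amplitudes[n-1] is the weight of the n-th harmonic."""
    return sum(a * standing_wave(x, t, n, length, speed)
               for n, a in enumerate(amplitudes, start=1))

# Assumed example: a 0.65 m string carrying waves at 130 m/s.
length, speed = 0.65, 130.0
print("first four frequencies (Hz):", harmonic_frequencies(length, speed, 4))
# The second harmonic has a node at the midpoint of the string.
print("n = 2 at midpoint:", standing_wave(length / 2.0, 0.001, 2, length, speed))
# Fundamental plus a weaker third harmonic, evaluated at one point and time.
print("composite:", composite_wave(0.2, 0.001, [1.0, 0.0, 1.0 / 3.0], length, speed))
```

Changing the list of amplitudes changes the shape of the composite wave, which is the idea behind the different waveforms built from the fundamental and its harmonics.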

The summation sign $\sum$ represents a sum over all the terms with an index n. For example, $\sum n^2 = 1^2 + 2^2 + 3^2 + \dots$ The integral sign $\int$ represents a sum over a continuous variable x from x = -L to x = +L. A trivial example is $\int_{-L}^{+L} dx = 2L$.
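Both kinds of sum can be imitated on a computer, which may help make the notation concrete. The sketch below is an illustration only: the function names are hypothetical, the integral is approximated by the midpoint rule, and the coefficient formula $b_n = (1/L)\int_{-L}^{+L} f(x)\sin(n\pi x/L)\,dx$ is the common textbook convention for a Fourier sine coefficient, assumed here rather than taken from the original. The square wave used as the test function is likewise an assumed example.

```python
import math

def sum_of_squares(n_max):
    """Finite version of the sum 1^2 + 2^2 + ... + n_max^2."""
    return sum(n**2 for n in range(1, n_max + 1))

def integrate(func, a, b, steps=10000):
    """Midpoint-rule approximation of the integral of func from a to b."""
    width = (b - a) / steps
    return sum(func(a + (i + 0.5) * width) for i in range(steps)) * width

def sine_coefficient(func, n, half_length):
    """b_n = (1/L) * integral from -L to +L of func(x)*sin(n*pi*x/L) dx (assumed convention)."""
    def integrand(x):
        return func(x) * math.sin(n * math.pi * x / half_length)
    return integrate(integrand, -half_length, half_length) / half_length

def square_wave(x):
    """Assumed test function: -1 for x < 0 and +1 for x >= 0."""
    return 1.0 if x >= 0 else -1.0

print("1^2 + 2^2 + 3^2 =", sum_of_squares(3))
print("integral of dx from -2 to +2:", integrate(lambda x: 1.0, -2.0, 2.0))
print("b_1 of the square wave:", sine_coefficient(square_wave, 1, 2.0))
print("b_2 of the square wave:", sine_coefficient(square_wave, 2, 2.0))
```

For the square wave the first coefficient comes out close to $4/\pi$ and the second close to zero, so only the odd harmonics contribute to that waveform.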


... wave. Electromagnetic waves usually do not propagate in a single direction either: Figure 07b shows the radiation pattern for a charge accelerated along its direction of motion. The figure-eight pattern (a doughnut with no hole in three dimensions) is emitted at low velocity, while the lobes (a thick cone in three dimensions) are generated at speeds close to the velocity of light.

Figure 07a Line Broadening

Figure 07b Radiation Pattern
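The change from a figure-eight to forward-pointing lobes can be explored with the standard textbook expression for a charge whose acceleration is parallel to its velocity, in which the power per unit solid angle is proportional to $\sin^2\theta / (1 - \beta\cos\theta)^5$, with $\theta$ measured from the direction of motion and $\beta = v/c$. That formula is not given in the original and is brought in here as an assumption; the function names are hypothetical, and the sketch only locates the angle of strongest emission.

```python
import math

def angular_power(theta, beta):
    """Relative power per unit solid angle at angle theta from the direction of motion."""
    return math.sin(theta) ** 2 / (1.0 - beta * math.cos(theta)) ** 5

def angle_of_maximum(beta, samples=100000):
    """Scan 0..pi for the angle (in radians) where the emission is strongest."""
    thetas = [math.pi * i / samples for i in range(samples + 1)]
    return max(thetas, key=lambda theta: angular_power(theta, beta))

# Slow charge: the maximum sits near 90 degrees (the figure-eight pattern).
print("beta = 0.001:", math.degrees(angle_of_maximum(0.001)), "degrees")
# Fast charge: the maximum tips toward the direction of motion (the lobes).
print("beta = 0.99:", math.degrees(angle_of_maximum(0.99)), "degrees")
```

Under this assumed formula the emission peaks almost at right angles to the motion when the charge is slow and swings to within a few degrees of the forward direction as the speed approaches that of light.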