

Thermodynamics provides a macroscopic description of matter and energy. It is a branch of physics developed in the 19th century at the beginning of the industrial revolution, with the invention of the steam engine (Figure 01). It was driven by the need for a better source of energy, and a more efficient use of it, than the competitors had (among the English, French, and Germans). It was a case where technology drove basic research rather than vice versa.

Figure 01 Steam Engine

In modern physics, the heat Q represents the "energy transfer" associated with the random motion of particles, while the work W is the "energy transfer" carried by the organized motion of a collection of particles (Figure 02).


Figure 03 Thermodynamic Entropy


This new variable is called "entropy" (en for within, trope for transformation in Greek) : $S = Q/T$ ---------- Eq.(6). The formulas in Eqs.(3) and (5) together make up the original form of the Second Law : the entropy of a closed system tends to remain constant or to increase.

Figure 04

Third Law : Writing Eq.(6) in differential form, i.e., $dQ = T\,dS$, shows that $dQ \to 0$ as $T \to 0$, from which this law states that it is impossible to attain a temperature of absolute zero (Figure 04), a corollary of the 2nd Law.

$\Delta U = \Delta Q - \Delta W$ ---------- Eq.(7)

$\Delta U$ denotes the change of internal energy, which can take many forms including the kinetic energies of molecular translation, vibration, and rotation, and the potential energy of inter-molecular interaction (Figure 04). Its details are usually of no concern in thermodynamics. $\Delta U$ is needed for book-keeping purposes, to balance the amounts of heat and work; $\Delta U = 0$ over a complete reversible cycle.

… (such as in microfluidics, chemical reactions, molecular folding, cell membranes, and cosmic expansion) operate far from equilibrium, where the standard theory of thermodynamics does not apply. Figure 05 shows the cases for different kinds of thermodynamic theory. Case 1 is overall equilibrium in the system, which is described by classical thermodynamics. Case 2 has local equilibrium in different regions. A theory of nonequilibrium thermodynamics (using the concept of flow or flux) has been developed for such situations. In case 3 the molecules become a chaotic jumble such that the concept of temperature is no longer applicable. A new theory has been formulated by using a new set of variables within the very short timescale for the transformation. The second law of thermodynamics has been shown to be valid for all these cases. (see more in "Non-equilibrium thermodynamics")

Figure 05 Thermodynamics Theory

… $\ln(Z_n)$ ---------- Eq.(9),


Figure 06 Macro/Micro-state

… $\{[(\pi^{1/2}(2mE)^{1/2}V^{1/3})/h]^{3N}/(N!)\}(\Delta E/E)$ ---------- Eq.(10a)

… $\ln[(\pi^{3/2}(2mE)^{3/2}V)/Nh^{3}] + k\,\ln(\Delta E/E)$ ---------- Eq.(10b)

… $\ln[(\pi^{3/2}(3mkT)^{3/2}V)/Nh^{3}] + k\,\ln(\Delta T/T)$ ---------- Eq.(10c)

… $\Delta p = (2mE)^{1/2}(\Delta E/2E)$ is the range of momentum transforming to the energy E,

… $\pi^{3N/2}(2mE)^{(3N-1)/2}$ comes from integrating up to the energy $E = p^{2}/2m$,

… $\Delta E/E \sim 0.1$). The partition function is reduced to :

… $\ln[Z(N)] \sim Nk$ ---------- Eq.(12),

… $\Delta S(N) \sim (N_f - N_i)k$ ---------- Eq.(13a),

… $\Delta S(V) \sim Nk\,\ln(V_f/V_i)$ ---------- Eq.(13b),

… $\Delta S(T) \sim Nk\,\ln(T_f/T_i)$ ---------- Eq.(13c).

… $\Delta E$, $\Delta S(\Delta E) \sim k\,\ln(\Delta E_f/\Delta E_i)$ ---------- Eq.(13d).

… $\Delta E$ or $\Delta T$.

… $\ln(Z)$ ---------- Eq.(14),

… $\ln\{[((2mE)^{1/2}V^{1/3})/h]^{3}/N\}$ ---------- Eq.(15a)

… = Z, i.e., the partition function mentioned above).
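As a rough numerical illustration of Eqs.(13b) and (13c), the sketch below evaluates the two expressions for one mole of particles. It takes the formulas at face value as the order-of-magnitude estimates that the "~" sign suggests; the function names and the sample values (a doubling of volume, a doubling of temperature) are assumptions made for this example and are not part of the original text.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant in J/K
N_A = 6.02214076e23   # Avogadro number: one mole of particles (sample value)


def delta_s_volume(n_particles, v_final, v_initial):
    """Entropy change as written in Eq.(13b): N k ln(Vf/Vi)."""
    return n_particles * K_B * math.log(v_final / v_initial)


def delta_s_temperature(n_particles, t_final, t_initial):
    """Entropy change as written in Eq.(13c): N k ln(Tf/Ti)."""
    return n_particles * K_B * math.log(t_final / t_initial)


# Hypothetical cases: the volume doubles, or the temperature doubles.
ds_volume = delta_s_volume(N_A, 2.0, 1.0)
ds_temperature = delta_s_temperature(N_A, 600.0, 300.0)

print(f"dS(V) for a doubled volume      : {ds_volume:.3f} J/K")
print(f"dS(T) for a doubled temperature : {ds_temperature:.3f} J/K")
```

Both cases give Nk ln(2), about 5.76 J/K for one mole, since only the ratio of final to initial value enters the logarithm.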


The generalization has degenerated to merely a qualitative descriptor (Figure 09a). For example, books neatly cataloged on the bookshelf are considered to have lower entropy, while those scattered around on the table have higher entropy.

Figure 08 Entropy of Pair of Dice

Figure 09a

Figure 09b Order and Disorder of Books

Such loose association has led most laymen to equate entropy with order/disorder (Figure 09b).


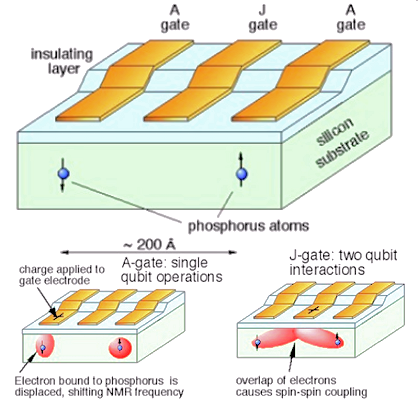

Information is a concept first used to resolve the paradox of Maxwell's demon. About 150 years ago, the physicist J. C. Maxwell came up with an intriguing idea. He conceived a thought experiment in which a little demon operates a friction-free trap door to separate air molecules of one type from the other (see Figure 10), and finally arrives at a system with lower entropy. Such organizing ability of Maxwell's demon seems to violate the second law of thermodynamics, as the demon only selects molecules but does no work. This paradox kept physicists in suspense for half a century until Leo Szilard showed that the demon's stunt really isn't free of charge. By selecting a molecule out of the alternative of 2 types, he creates something called information, which produces an amount of entropy (through mental processing in the brain, see Integrated Information Theory of Consciousness) exactly offsetting the decrement in the re-arrangement. The unit of this commodity is the bit, and each time the demon chooses a molecule to shuffle, he shells out one bit of information for this cognitive act, which precisely balances the thermodynamic accounts. The new concept has since shown its usefulness in communication and computing, but perhaps its greatest power lies in biology, for the organizing entities in living beings, the proteins and certain RNAs, are but the demon's trick in reverse.

Figure 10 Maxwell's Demon

… $\ln(x)/\ln(2) = 1.44\,\ln(x)$.

In terms of a lottery draw, you become rich if you somehow know the winning number out of the Z = N = 13983816 (for Lotto 6/49, Figure 11) combinations (arrangements) beforehand, i.e., you have a lot of information. On the other hand, there is very little information when you randomly pick a ticket. In this example, information is related to the probability $p = n/N$, and $I = \log_2(p)$. The choice is unique and contains a lot of information for n = 1, while n = N for a random pick, which is certainly among the combinations but may not be the winning one. Since the probability is always equal to or less than 1, I is never positive by definition. There is no need to place a minus sign in front. The original formulation of information made use of probability in binary choice, as explained in the following.
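The lottery numbers can be checked with a short sketch. It counts the 6-out-of-49 combinations and evaluates I = log2(n/N) for the two cases discussed, the unique choice (n = 1) and the random pick (n = N). The function name `information` is an assumption made for this example.

```python
import math

# Number of ways to choose 6 numbers out of 49 (Lotto 6/49).
N = math.comb(49, 6)


def information(n, total):
    """Information I = log2(p) with p = n/total, negative by the text's convention."""
    return math.log2(n / total)


i_unique = information(1, N)   # knowing the single winning combination
i_random = information(N, N)   # a ticket that is merely "one of the N"

print("combinations N :", N)
print("I for n = 1    :", round(i_unique, 2), "bits")
print("I for n = N    :", i_random, "bits")
```

The unique choice is worth nearly 24 bits in magnitude, while the random pick carries none.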

Figure 11 Probability in Lottery

Essentially, the alternate definition just turns the number of arrangements upside down, i.e., it turns N into 1/N in the formulation, albeit with some refinements.


---------- Eq.(18a), where $p_i = n_i/N$ is the probability of finding the subject (the "ace" in the example) among the $n_i$ members of the partition, and N is the total number in the sample. For the special case where the partitions have equal numbers of members as in Figure 12, $I_i = \log_2(p_i)$ ---------- Eq.(18b)

Figure 12 Information, Definition

Note that $I_8 = \log_2(1/N) = -3$ for a unique choice as shown in Figure 12.
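The link between Eq.(18b) and binary choice can be illustrated with a small sketch, assuming N = 8 equally likely items as implied by the value -3 quoted above. The helper `binary_choices` is a name invented for this example; it counts how many yes/no selections are needed to isolate one item by repeated halving and compares the count with log2(1/N).

```python
import math


def binary_choices(total):
    """Count the yes/no selections needed to isolate one item among `total`
    equally likely items by repeatedly halving the set of candidates."""
    count = 0
    remaining = total
    while remaining > 1:
        remaining = (remaining + 1) // 2   # keep the half that holds the item
        count += 1
    return count


N = 8                                  # sample size assumed from I = -3
info_unique = math.log2(1 / N)         # Eq.(18b) with n_i = 1

# Information for partitions of shrinking size: 4, 2 and 1 members out of 8.
info_by_partition = [math.log2(n / N) for n in (4, 2, 1)]

print("binary choices needed :", binary_choices(N))
print("I for a unique choice :", info_unique)
print("I for n = 4, 2, 1     :", info_by_partition)
```

Each halving of the partition adds one bit in magnitude, so three binary choices correspond to I = -3.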

… $10^{-16}$ erg/K, which is very small compared to the entropy generated in raising 1 gram of water by 1°C at room temperature (27°C), i.e., $\Delta S \approx 1.4\times10^{5}$ erg/K.
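The comparison can be checked with a few lines of arithmetic. This is a sketch under two stated assumptions that are not in the original text: that the figure of about 10^-16 erg/K is the entropy of one bit, k ln(2), and that the specific heat of water is 4.18 J per gram per kelvin. The entropy of heating is taken as m c ln(T2/T1), which for a 1 degree step is very close to Q/T.

```python
import math

K_B_ERG = 1.380649e-16   # Boltzmann constant in erg/K
JOULE_TO_ERG = 1.0e7     # 1 J = 10^7 erg
C_WATER = 4.18           # specific heat of water in J/(g K), assumed value

# Entropy of one bit, assuming it is k ln(2).
s_bit = K_B_ERG * math.log(2)

# Entropy gained by 1 g of water heated from 27 C to 28 C.
t_start = 27.0 + 273.15
t_end = t_start + 1.0
ds_water = 1.0 * C_WATER * math.log(t_end / t_start) * JOULE_TO_ERG

print(f"entropy of one bit    : {s_bit:.2e} erg/K")
print(f"entropy of warm water : {ds_water:.2e} erg/K")
print(f"ratio                 : {ds_water / s_bit:.1e}")
```

Under these assumptions the heated water gains about 1.4x10^5 erg/K, some 21 orders of magnitude more than a single bit.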

… continuous thermal jostling of molecules forever. Thus, to maintain its highly non-equilibrium state, an organism must continuously pump in information. The organism takes information from the environment and funnels it inside. This means that the environment must undergo an equivalent increase in thermodynamic entropy; for every bit of information the organism gains, the entropy in the external environment must rise by a corresponding amount. In other words, information is just the flip side of entropy; while entropy is related to the number of microstates, information specifies only one (sometimes many) of the microstates out of such a configuration. The process just turns the demon's trick around to infuse information by lowering entropy.

Figure 13 Life Cycle


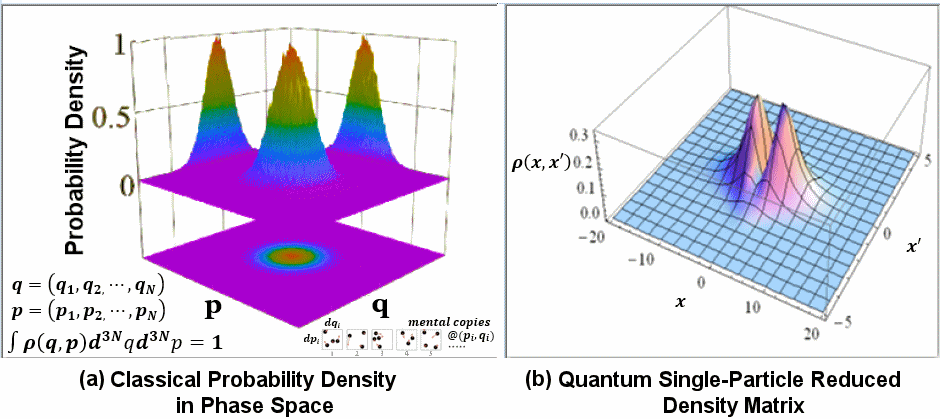

Figure 14 Density Matrix


… and x in … spin state respectively (see Figure 15). Then $\rho$ is just the outer product of the spin state, i.e., $\rho$ = … .


Figure 15 Superposition of Spin

The von Neumann entropy $S(\rho)$ is the quantum version of "Shannon's Measure of Information" : SMI = $-\sum_i p_i \log_2(p_i)$ (also see Eq.(18a)), i.e.,

$S(\rho) = -\mathrm{Tr}[\rho\,\ln(\rho)]$, where Tr is the trace of the matrix.
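For a single spin the trace formula can be evaluated by hand, since the density matrix is only 2x2. The sketch below is an illustration, not the method used for the figures: it builds the density matrix as the outer product of a spin state, finds the two eigenvalues in closed form, and sums -λ ln(λ) over them. The function names and the two sample states (an equal superposition and an equal mixture) are assumptions made for this example.

```python
import math


def outer(psi):
    """Density matrix of a pure state: outer product of psi with its conjugate."""
    return [[x * y.conjugate() for y in psi] for x in psi]


def eigenvalues_2x2_hermitian(rho):
    """Closed-form eigenvalues of a 2x2 Hermitian matrix [[a, b], [b*, d]]."""
    a = rho[0][0].real
    d = rho[1][1].real
    b = rho[0][1]
    mean = (a + d) / 2.0
    radius = math.sqrt(((a - d) / 2.0) ** 2 + abs(b) ** 2)
    return (mean - radius, mean + radius)


def von_neumann_entropy(rho):
    """S(rho) = -Tr[rho ln(rho)], computed as the sum of -lam ln(lam)."""
    entropy = 0.0
    for lam in eigenvalues_2x2_hermitian(rho):
        if lam > 1e-12:            # the limit of lam ln(lam) is 0 as lam -> 0
            entropy -= lam * math.log(lam)
    return entropy


# Pure state: equal superposition of spin up and spin down.
amplitude = 1.0 / math.sqrt(2.0)
rho_pure = outer([amplitude, amplitude])

# Mixed state: spin up or spin down with probability 1/2 each.
rho_mixed = [[0.5, 0.0], [0.0, 0.5]]

s_pure = von_neumann_entropy(rho_pure)
s_mixed = von_neumann_entropy(rho_mixed)
print("S(pure)  =", round(s_pure, 6))
print("S(mixed) =", round(s_mixed, 6))
```

The pure superposition has zero entropy, while the equal mixture reaches ln(2), which is one bit when expressed in base 2.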


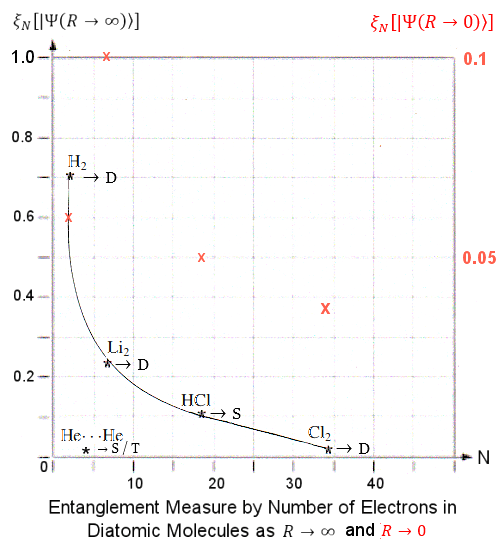

Entanglement measures for the electrons in 5 diatomic molecules were published in a 2011 paper entitled "Quantum Entanglement and the Dissociation Process of Diatomic Molecules". A post-Hartree-Fock computational method is employed to calculate the wave function and ultimately the entanglement measure as a function of the inter-atomic distance R. Figure 16 shows the entanglement measure between one electron and the rest in 5 different diatomic molecules as a function of R. Figure 17 is a graph showing the limiting cases of the entanglement measure as $R \to 0$ and $R \to \infty$ (at different scales, in a ratio of 0.1/1).

Figure 16 Entanglement of Electrons in Diatomic Molecules


It has been shown previously that the entanglement measure is expressed in terms of the von Neumann entropy $S(\rho)$. Disorder is the usual notion of entropy (von Neumann and otherwise). A more useful interpretation in the current context is in terms of "multiplicity", which is the number of different arrangements that arrive at the same configuration (state).

Thus, the general trend of increasing entanglement measure for large R (as shown in Figure 16) can be understood as entropy increasing with larger volume. However, this does not explain the bump near the united-atom limit for some of the molecules.

Figure 17

Figure 18

Figure 17 also shows that the entanglement measure decreases rapidly as the number of electrons increases. This trend may be related to the sharing of entanglement between electrons. Entanglement is at its maximum with monogamy (as in the case of the H2 molecule); shared entanglement is called polygamy, which produces weaker entanglement with more partners (see "Degree of Entanglement").


Although the Second Law of Thermodynamics dictates that entropy tends to increase relentlessly, we see orderly structures all around, from galaxies to houses (Figure 19). Such regular features derive from special properties of nature and of humans. It is the attractive forces in nature, such as gravity and electromagnetism, and the information (meaning work) supplied by humans that have reversed the Second Law "locally" at the expense of the larger environment. The following are some examples to show the cause and consequence.

Figure 19 Orderly Structures


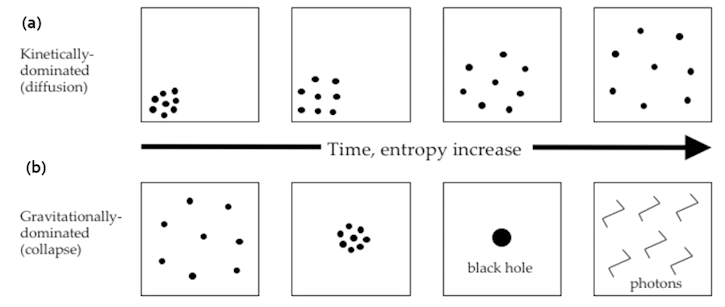

Figure 20 Entropy Evolution

Figure 20a shows the evolution of entropy in free space with the diffusion of particles. In Figure 20b the system is collapsing into a black hole under the pull of gravity; the increase in entropy is via the Hawking radiation. Meanwhile the entropy of the black hole is reduced to at most three bits :


… provide the static force that keeps it in place. Figure 21a illustrates the connection of entropy with randomness once the support of orderliness is removed, by implosion in this case. All man-made structures slowly crumble under the ravages of time, through thermal bombardment and chemical interactions with air and water (Figure 21b).

Figure 21a Rise of Entropy by Implosion

Figure 21b Great Wall


Such a requirement is fulfilled by re-transmission of lower frequency radiation back to the cold dark space as illustrated in the figure. The process re-emits more entropy (at lower frequency) back to space. By Boltzmann's definition of entropy, it has the effect of enlarging the phase space volume and hence returning more entropy to space than is received from the Sun, in accordance with the second law of thermodynamics. Ultimately, it is the stars which generate the carbon atoms (see "Origin of Elements"), and the Sun which supplies energy to all life on Earth.

Figure 22 Entropy and Life


Figure 23 Evolution of Cosmic Entropy

Figure 24 Initial Cosmic Entropy

… $T^{4}$; since in terms of the size of the universe R, $T \propto 1/R$, $dV \propto R^{2}dR$, thus $dS \propto dR/R$ and the entropy $S \propto \log(R)$.

… $dU/T$ will increase until space is nearly empty in attaining the highest entropy state.
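The logarithmic growth can be checked numerically. The sketch below assumes, as a reading of the scaling relations above, that dS = dU/T with dU proportional to T^4 dV, T = 1/R and dV = R^2 dR, all in arbitrary units with the proportionality constants set to 1. The function name and the integration range are assumptions made for this example.

```python
import math


def entropy_growth(r_start, r_end, steps=100_000):
    """Midpoint-rule integral of dS = dU/T from r_start to r_end,
    with dU = T^4 dV, T = 1/R and dV = R^2 dR (arbitrary units)."""
    dr = (r_end - r_start) / steps
    total = 0.0
    for i in range(steps):
        r = r_start + (i + 0.5) * dr
        temperature = 1.0 / r
        d_volume = r ** 2 * dr
        d_energy = temperature ** 4 * d_volume   # assumed T^4 scaling
        total += d_energy / temperature
    return total


# Entropy gained while the size grows tenfold, against the log(R) prediction.
s_numeric = entropy_growth(1.0, 10.0)
s_log = math.log(10.0)
print("numerical integral :", round(s_numeric, 6))
print("log(10)            :", round(s_log, 6))
```

The integrand reduces to 1/R, so each tenfold growth in R adds the same increment of entropy in these units.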